Researchers from the Mayo Clinic have designed a computational model that analyses symptoms of Alzheimer’s disease, a potentially groundbreaking advance in monitoring the development of the neurological condition.

The Mayo Clinic team built the new model by applying machine learning to patient brain imaging data, mapping symptoms of Alzheimer’s disease to brain anatomy. The model uses the function of the entire brain, rather than specific brain regions or networks, to examine the relationship between brain anatomy and mental processing.

David T. Jones, MD, a Mayo Clinic neurologist and lead author of the study, said: “This new model can advance our understanding of how the brain works and breaks down during ageing and Alzheimer’s disease, providing new ways to monitor, prevent and treat disorders of the mind.”

Difficulties identifying symptoms of Alzheimer’s disease

Previous research illustrated that a problem with protein processing causes Alzheimer’s disease. The toxic amyloid and tau proteins deposit in areas of the brain, which results in neuron failure that causes symptoms of Alzheimer’s disease, including memory loss, confusion, and difficulty communicating.

Despite this knowledge, the relationship between clinical symptoms of Alzheimer’s disease and patterns of brain damage and brain anatomy remains unclear. Moreover, individuals can have more than one neurodegenerative disease, making an accurate diagnosis challenging. This innovation, which maps brain behaviour, may enable clinicians to make more precise assessments.

Measuring brain activity with machine learning

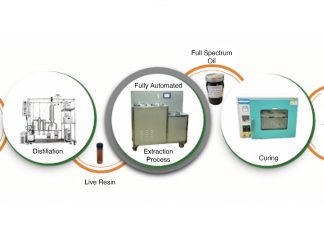

The team created their model using brain glucose measurements from fluorodeoxyglucose positron emission tomography (FDG-PET) scans of 423 cognitively impaired patients involved in the Mayo Clinic Study of Aging and the Mayo Clinic Alzheimer’s Disease Research Center.

FDG-PET is a type of imaging test that highlights how glucose powers different areas of the brain. This is essential for analysing neurodegenerative diseases such as Alzheimer’s disease, Lewy body dementia and frontotemporal dementia, as they all have different patterns of glucose use.

The new model compresses complex brain anatomy related to symptoms of Alzheimer’s disease into a colour-coded framework that shows areas of the brain linked with neurodegenerative disorders and mental functions. The model’s imaging pattern correlates with the symptoms the patient experiences.

The team validated the model’s performance in 410 people and obtained additional validation by projecting a large amount of data from normal ageing and from dementia syndromes targeting memory, executive functions, language, behaviour, movement, perception, semantic knowledge, and visuospatial abilities.

The team discovered that 51% of the variance in glucose use patterns in the dementia patients’ brains was explained by only ten patterns. Each individual has a unique combination of these ten brain glucose patterns that relates to the specific symptoms of Alzheimer’s disease they experience.
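
To illustrate what it means for a handful of patterns to "explain" a share of the variance, here is a minimal sketch using principal component analysis on synthetic data. The article does not say which decomposition the team used, so the choice of PCA, the number of brain regions, the noise level, and the names `explained_variance_ratio` and `scans` are all assumptions made for illustration. The numbers it prints describe random data, not patient scans.

```python
import numpy as np


def explained_variance_ratio(X):
    """Fraction of total variance captured by each principal component.

    X has one row per scan and one column per brain region (hypothetical layout).
    """
    Xc = X - X.mean(axis=0)                    # centre each region's values
    s = np.linalg.svd(Xc, compute_uv=False)    # singular values, largest first
    var = s ** 2                               # proportional to component variance
    return var / var.sum()


# Synthetic stand-in data: every "scan" mixes 10 latent patterns plus noise.
# These sizes and the noise scale are illustrative assumptions only.
rng = np.random.default_rng(0)
n_scans, n_regions, n_patterns = 423, 200, 10
patterns = rng.normal(size=(n_patterns, n_regions))
weights = rng.normal(size=(n_scans, n_patterns))   # each scan's unique combination
noise = rng.normal(scale=3.0, size=(n_scans, n_regions))
scans = weights @ patterns + noise

ratio = explained_variance_ratio(scans)
top10_share = ratio[:10].sum()
print(f"Top 10 components explain {top10_share:.0%} of the variance")
```

In this sketch each row of `weights` plays the role of an individual’s combination of patterns, while the remaining components capture whatever the top ten patterns leave unexplained.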

The Mayo Clinic’s Department of Neurology Artificial Intelligence (AI) Programme is now conducting follow-up work using the ten patterns to develop AI systems that interpret brain scans from people being evaluated for Alzheimer’s disease and related conditions.

Dr Jones said: “This new computational model, with more validation and support, has the potential to redirect scientific efforts to focus on dynamics in complex systems biology in the study of the mind and dementia rather than primarily focusing on misfolded proteins.

“If the mental functions relevant for Alzheimer’s disease are performed in a distributed manner across the entire brain, a new disease model like what we are proposing is needed. We think this model can potentially impact diagnostics, treatments and the fundamental understanding of neurodegeneration and mental functions in general.”